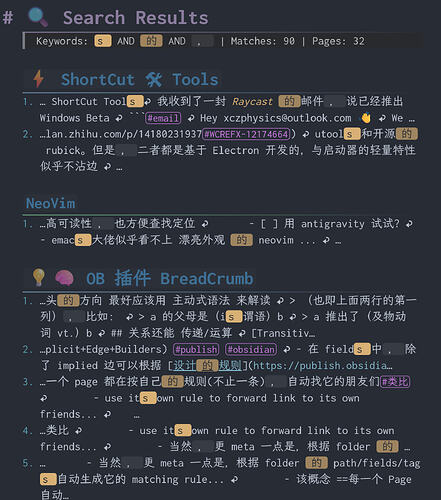

Missing the previous full-text search, so extended this script — now largely transformed.

- Multi-keyword/phrase search with distinct highlights.

- Header cues showing the folder hierarchy of matched pages (with optional folder tree).

- Hit counting with ordered lists.

- configurable contextLength for multi-phrases…

```space-lua

-- ============================================

-- Configuration

-- ============================================

local config = {

showParentHeaders = false,

contextLength = 50 -- size of context window (for multi-)

}

-- Highlight styles for different keywords (recycling)

local highlightStyles = {

function(kw) return "==" .. kw .. "==" end,

function(kw) return "==`" .. kw .. "`==" end,

function(kw) return "`" .. kw .. "`" end,

function(kw) return "**==" .. kw .. "==**" end,

function(kw) return "**`" .. kw .. "`**" end,

function(kw) return "*==`" .. kw .. "`==*" end,

function(kw) return "*`" .. kw .. "`*" end,

function(kw) return "**" .. kw .. "**" end,

function(kw) return "*" .. kw .. "*" end,

function(kw) return "*==" .. kw .. "==*" end,

}

-- Clean context string (remove newlines)

local function cleanContext(s)

if not s then return "" end

return string.gsub(s, "[\r\n]+", " ↩ ")

end

-- Generate heading prefix based on depth

local function headingPrefix(depth)

if depth > 6 then depth = 6 end

return string.rep("#", depth) .. " "

end

-- Build hierarchical headers for a page path

local function buildHierarchicalHeaders(pageName, existingPaths)

local output = {}

local parts = {}

for part in string.gmatch(pageName, "[^/]+") do

table.insert(parts, part)

end

local currentPath = ""

for i, part in ipairs(parts) do

if i > 1 then

currentPath = currentPath .. "/"

end

currentPath = currentPath .. part

if not existingPaths[currentPath] then

existingPaths[currentPath] = true

if i == #parts then

table.insert(output, headingPrefix(i) .. "[[" .. pageName .. "]]")

elseif config.showParentHeaders then

table.insert(output, headingPrefix(i) .. part)

end

end

end

return output

end

-- Parse keywords from input

-- Supports: `phrase with spaces` as single keyword, space separates others

local function parseKeywords(input)

local keywords = {}

local i = 1

local len = #input

while i <= len do

-- Skip whitespace

while i <= len and string.sub(input, i, i):match("%s") do

i = i + 1

end

if i > len then break end

local char = string.sub(input, i, i)

if char == "`" then

-- Find closing backtick

local closePos = string.find(input, "`", i + 1, true)

if closePos then

local phrase = string.sub(input, i + 1, closePos - 1)

if phrase ~= "" then

table.insert(keywords, phrase)

end

i = closePos + 1

else

-- No closing backtick, treat rest as keyword

local phrase = string.sub(input, i + 1)

if phrase ~= "" then

table.insert(keywords, phrase)

end

break

end

else

-- Regular word until whitespace or backtick

local wordEnd = i

while wordEnd <= len do

local c = string.sub(input, wordEnd, wordEnd)

if c:match("%s") or c == "`" then

break

end

wordEnd = wordEnd + 1

end

local word = string.sub(input, i, wordEnd - 1)

if word ~= "" then

table.insert(keywords, word)

end

i = wordEnd

end

end

return keywords

end

-- Find all positions of a keyword in content (plain text search)

local function findAllPositions(content, keyword)

local positions = {}

local start = 1

while true do

local pos = string.find(content, keyword, start, true)

if not pos then break end

table.insert(positions, {

pos = pos,

endPos = pos + #keyword - 1,

keyword = keyword

})

start = pos + 1

end

return positions

end

-- For single keyword: find all occurrences with context

local function findSingleKeywordMatches(content, keyword, ctxLen)

local matches = {}

local positions = findAllPositions(content, keyword)

local contentLen = #content

for _, p in ipairs(positions) do

local prefixStart = math.max(1, p.pos - ctxLen)

local suffixEnd = math.min(contentLen, p.endPos + ctxLen)

local prefix = cleanContext(string.sub(content, prefixStart, p.pos - 1))

local suffix = cleanContext(string.sub(content, p.endPos + 1, suffixEnd))

table.insert(matches, {

prefix = prefix,

suffix = suffix,

keyword = keyword

})

end

return matches

end

-- For multiple keywords: find contexts where ALL keywords appear within window

local function findMultiKeywordMatches(content, keywords, ctxLen)

local matches = {}

local contentLen = #content

-- Get all positions for first keyword

local firstKeywordPositions = findAllPositions(content, keywords[1])

for _, anchor in ipairs(firstKeywordPositions) do

-- Define search window around this anchor

local windowStart = math.max(1, anchor.pos - ctxLen)

local windowEnd = math.min(contentLen, anchor.endPos + ctxLen)

local window = string.sub(content, windowStart, windowEnd)

-- Check if all other keywords exist in this window

local allFound = true

local keywordPositionsInWindow = {}

-- Add first keyword position (relative to window)

table.insert(keywordPositionsInWindow, {

keyword = keywords[1],

keywordIndex = 1,

relPos = anchor.pos - windowStart + 1,

len = #keywords[1]

})

for i = 2, #keywords do

local kw = keywords[i]

local relPos = string.find(window, kw, 1, true)

if not relPos then

allFound = false

break

end

table.insert(keywordPositionsInWindow, {

keyword = kw,

keywordIndex = i,

relPos = relPos,

len = #kw

})

end

if allFound then

-- Build highlighted snippet

-- Sort by position descending for safe replacement

table.sort(keywordPositionsInWindow, function(a, b)

return a.relPos > b.relPos

end)

local snippet = cleanContext(window)

-- Recalculate positions after cleaning (newlines become spaces)

-- Re-find each keyword in cleaned snippet

local cleanedPositions = {}

for _, kp in ipairs(keywordPositionsInWindow) do

local pos = string.find(snippet, kp.keyword, 1, true)

if pos then

table.insert(cleanedPositions, {

keyword = kp.keyword,

keywordIndex = kp.keywordIndex,

relPos = pos,

len = kp.len

})

end

end

-- Sort descending and apply highlights

table.sort(cleanedPositions, function(a, b)

return a.relPos > b.relPos

end)

for _, p in ipairs(cleanedPositions) do

local styleIndex = ((p.keywordIndex - 1) % #highlightStyles) + 1

local highlightFn = highlightStyles[styleIndex]

local before = string.sub(snippet, 1, p.relPos - 1)

local after = string.sub(snippet, p.relPos + p.len)

snippet = before .. highlightFn(p.keyword) .. after

end

table.insert(matches, { snippet = snippet })

end

end

return matches

end

-- Core search function

local function searchGlobalOptimized(keywordInput)

if not keywordInput or keywordInput == "" then

return nil, 0, 0, {}

end

local keywords = parseKeywords(keywordInput)

if #keywords == 0 then

return nil, 0, 0, {}

end

local results = {}

local matchCount = 0

local pageCount = 0

local ctxLen = config.contextLength

local pages = space.listPages()

local existingPaths = {}

for _, page in ipairs(pages) do

if not string.find(page.name, "^search:") then

local content = space.readPage(page.name)

if content then

local pageMatches = {}

if #keywords == 1 then

-- Single keyword search

local kw = keywords[1]

if string.find(content, kw, 1, true) then

local singleMatches = findSingleKeywordMatches(content, kw, ctxLen)

for i, m in ipairs(singleMatches) do

local styleIndex = 1

local highlightFn = highlightStyles[styleIndex]

local formatted = string.format(

"%d. …%s%s%s…",

i,

m.prefix,

highlightFn(m.keyword),

m.suffix

)

table.insert(pageMatches, formatted)

end

end

else

-- Multi-keyword AND search within context window

local multiMatches = findMultiKeywordMatches(content, keywords, ctxLen)

for i, m in ipairs(multiMatches) do

local formatted = string.format("%d. …%s…", i, m.snippet)

table.insert(pageMatches, formatted)

end

end

if #pageMatches > 0 then

pageCount = pageCount + 1

local headers = buildHierarchicalHeaders(page.name, existingPaths)

for _, header in ipairs(headers) do

table.insert(results, header)

end

for _, match in ipairs(pageMatches) do

table.insert(results, match)

matchCount = matchCount + 1

end

table.insert(results, "")

end

end

end

end

return results, matchCount, pageCount, keywords

end

-- Build keyword legend for header

local function buildKeywordLegend(keywords)

local parts = {}

for i, kw in ipairs(keywords) do

local styleIndex = ((i - 1) % #highlightStyles) + 1

local highlightFn = highlightStyles[styleIndex]

table.insert(parts, highlightFn(kw))

end

return table.concat(parts, " AND ")

end

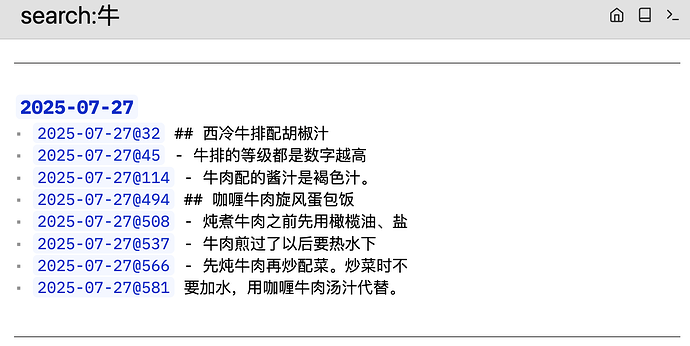

-- Virtual Page: search:keyword

virtualPage.define {

pattern = "search:(.+)",

run = function(keywordInput)

keywordInput = keywordInput:trim()

if not keywordInput or keywordInput == "" then

return [[

# ⚠️ Search Error

Please provide search keywords.

**Usage:**

- `search:keyword` - single keyword

- `search:word1 word2` - AND logic (both must appear within context window)

- `search:`phrase with spaces`` - backticks for exact phrase

]]

end

local results, matchCount, pageCount, keywords = searchGlobalOptimized(keywordInput)

local output = {}

table.insert(output, "# 🔍 Search Results")

local legend = buildKeywordLegend(keywords)

table.insert(output, string.format(

"> Keywords: %s | Matches: %d | Pages: %d",

legend, matchCount, pageCount

))

if matchCount == 0 then

table.insert(output, "")

table.insert(output, "😔 **No results found**")

table.insert(output, "")

table.insert(output, "Suggestions:")

table.insert(output, "1. Check spelling")

table.insert(output, "2. Try fewer keywords")

table.insert(output, "3. Keywords are case-sensitive")

if #keywords > 1 then

table.insert(output, "4. All keywords must appear within " .. config.contextLength .. " characters of each other")

end

else

table.insert(output, "")

for _, line in ipairs(results) do

table.insert(output, line)

end

end

return table.concat(output, "\n")

end

}

-- Command: Global Search

command.define {

name = "Global Search",

run = function()

local keyword = editor.prompt("🔍 Search (space=AND, phrase=`a b`)", "")

if keyword and keyword:trim() ~= "" then

editor.navigate("search:" .. keyword:trim())

end

end,

key = "Ctrl-Shift-f",

mac = "Cmd-Shift-f",

priority = 1,

}

For multi-keyword search, ugrep offers keyword AND comparing to ripgrep, and potentially faster on AND pattern (not verified).

I previously built a Sublime Search plugin. Ripgrep was initially slow and unfriendly to AND logic; switching to ugrep worked much better.

Just some notes for those seeking faster search performance.

Customize freely!